Texture-changing skin lets robot express its feelings

Cornell University engineers have made a robot that gets goosebumps or becomes spiky to communicate its internal state.

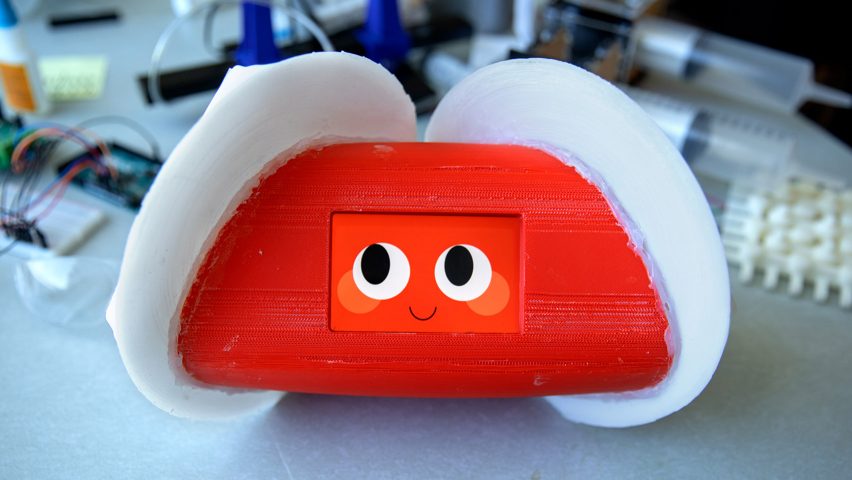

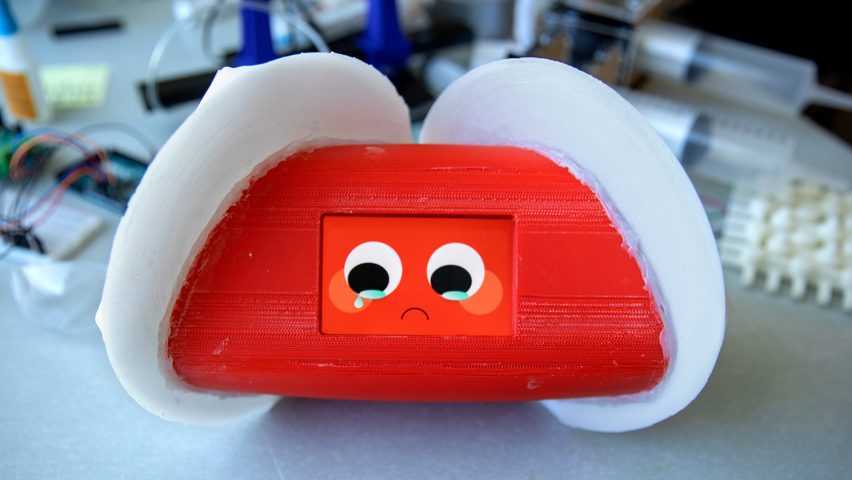

A product of the university's Human-Robot Collaboration and Companionship Lab, the prototype robot has a soft outer "skin" that exhibits different textures depending on what it wants to tell the person interacting with it.

The engineers based the skin on their observations of how humans interact with other species, reading visual and haptic cues to gauge an animal's state of mind.

When the fur on a dog's back rises, for example, we infer that it is feeling threatened or excited.

"We have a lot of interesting relationships with other species," said Guy Hoffman, assistant professor at Cornell's Sibley School of Mechanical and Aerospace Engineering, who led the students at the Human-Robot Collaboration and Companionship Lab.

"Robots could be thought of as one of those 'other species', not trying to copy what we do but interacting with us with their own language, tapping into our own instincts."

The team engineered the robot's stretchy skin to change texture when air is pumped into elastic cavities embedded under the surface of the material.

The expressive skin is made up of multiple layers of these air pockets, allowing many textures to be generated in various patterns. To mimic animal behaviours, the engineers chose goosebumps and spikes for the prototype.

Varying the frequency or level of air pressure produces different effects. A video produced by Cornell University shows the robot pulsing with exaggerated goosebumps when "happy" and shooting out spikes at a fast rhythm when "angry". A slower, more meandering pattern emerges when the robot is "sad".
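To make the link between pulse pattern and "emotion" more concrete, here is a minimal Python sketch that turns each state into a target air pressure over time. It assumes a simple raised-cosine pulse train. The function name `pressure_at`, the `GESTURES` table and every frequency and pressure value are hypothetical, chosen only to match the qualitative description above (fast for angry, slow for sad). They are not figures from the Cornell team.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Gesture:
    texture: str         # which layer of air pockets to inflate
    frequency_hz: float  # pulses per second (hypothetical)
    peak_kpa: float      # maximum pressure in kilopascals (hypothetical)


# Hypothetical mapping: the article describes only the qualitative pattern.
# The texture used for "sad" is also an assumption.
GESTURES = {
    "happy": Gesture("goosebumps", 1.0, 20.0),
    "angry": Gesture("spikes", 3.0, 30.0),
    "sad": Gesture("goosebumps", 0.25, 10.0),
}


def pressure_at(state: str, t: float) -> float:
    """Target pressure (kPa) at time t seconds for the given state.

    Uses a raised-cosine pulse: zero at t = 0, peak at half a period,
    so a higher frequency_hz means a faster rhythm of bumps or spikes.
    """
    g = GESTURES[state]
    phase = 2 * math.pi * g.frequency_hz * t
    return g.peak_kpa * 0.5 * (1 - math.cos(phase))
```

A pump controller could sample `pressure_at("angry", t)` at a fixed rate and drive the spike layer towards that value. Changing only `frequency_hz` and `peak_kpa` is enough to move from an energetic pattern to a slow, subdued one.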

The message is emphasised by a screen showing the corresponding expression on an animated face.

The Human-Robot Collaboration and Companionship Lab focuses on social robots like Aibo and Paro whose primary purpose is to interact with humans. The team wanted to expand the ways in which these machines can communicate with the people around them.

"At the moment, most social robots express [their] internal state only by using facial expressions and gestures," the team wrote in a paper presented in April at the International Conference on Soft Robotics in Livorno, Italy.

"We believe that the integration of a texture-changing skin, combining both haptic and visual modalities, can thus significantly enhance the expressive spectrum of robots for social interaction."

The research is also relevant to the wider field of robotics, where user psychology is a key consideration, particularly as artificial intelligence appears in more areas of people's lives.

Self-driving cars are one example: getting people to trust one enough to ride in it is a key challenge, as BMW board director Peter Schwarzenbauer discussed with Dezeen last year.

Cornell University's texture-changing robot project combines current psychological research with another scientific field that is seeing rapid progress: active materials. These are materials that change shape on demand, usually in response to changes in temperature or pressure. Examples include MIT's Liquid Printed Pneumatics and Active Auxetic material.

Hoffman and his team used an active material design developed by Rob Shepherd, a colleague in the Sibley School of Mechanical and Aerospace Engineering who heads its Organic Robotics Lab.

Project credits:

Design: Yuhan Hu, Zhengnan Zhao, Abheek Vimal and Guy Hoffman